June 15, 2020

The deep history of anthropogenic climate change

by: Charles Stanish, Executive Director, Institute for the Advanced Study of Culture and the Environment, USF

The Anthropocene is a new term to denote the period in history when people altered the landscape and ecology of the earth enough to change global climate (Lewis and Maslin, 2015). When and how these alterations occurred is key to understanding this climate change phenomenon. Ancient data from the sciences of archaeology and paleoclimatology provide a baseline of natural climate variability prior to any significant human impacts that we can use to guide us on how far the planet is from its natural state and what adaptive measures would benefit society most.

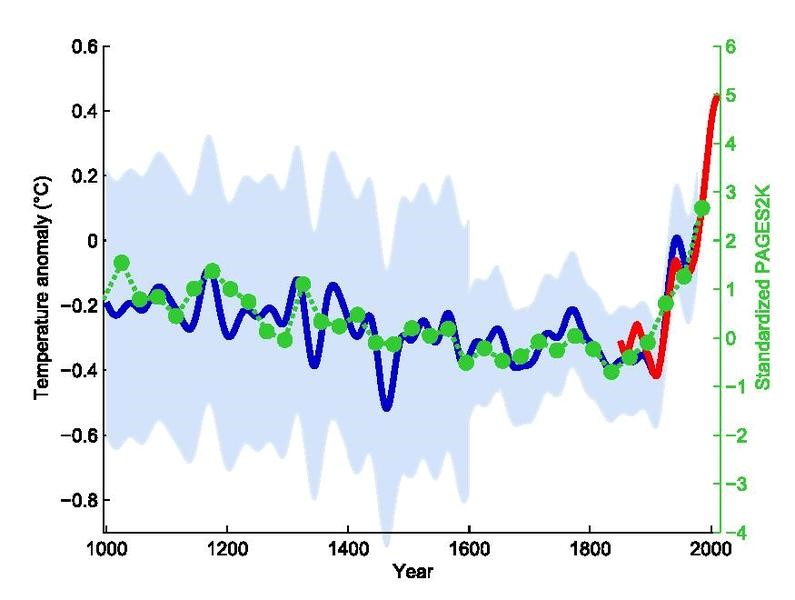

Temperature anomalies in the Northern Hemisphere compared to the 1961 to 1990 average. Data are from instrument measurements (red); from tree rings, corals, ice cores, and historical records (blue); and a 30-year average from pollen (green dots). The light blue is the uncertainty range. Credit: Klaus Bittermann Wikimedia (CC BY-SA 4.0)

The ancient data indicate that, indeed, global climate change is natural. However, this research also shows that the rate of climate change, in this case global warming, has spiked considerably in the last 200 years. It also shows that the warming spike we are currently experiencing is unidirectional, unlike earlier warming and cooling periods, which were episodic in nature. That back-and-forth process seems to have stalled, and the earth is getting increasingly warmer over time.

When the Anthropocene begins is a source of debate in the scientific literature. Some people point to a very recent start date, as late as the 20th century. Others suggest that it begins with the industrial revolution in the late 18th century, while some archaeologists and paleoclimatologists argue that it occurred much earlier, about 8000 years ago at least.

We know that a spike in the populations of late Pleistocene hunters and gatherers is correlated with the loss of large animals (megafauna) around 13,800 years ago (Smith and Zeder 2013). Whether these two events are merely correlative or are causal is a source of some contention. Some researchers suggest that the first major global change caused by humans is the extinction of the large mammoths, ancestral elephants, bears and so forth that lived in the Americas and many other regions in the world prior to 11,000 years ago.

There is little doubt that, with the advent of agriculture around 8000 years ago, humans affected local and regional climates. Over the millennia, starting in the Eastern Hemisphere in Mesopotamia and China, farmers progressively cleared forests and converted swamps into wet rice growing zones. Farming began in the Americas a couple of millennia later. Neotropical agriculture involved substantial burning of forests to clear areas for food production. These agricultural practices released the greenhouse gases carbon dioxide and methane into the atmosphere, albeit at a much slower pace than today.

By 5000 years ago, people in the circum-Mediterranean and China mined copper ores and other minerals. Archaeologists have tracked air and water pollution in these regions with fairly high degrees of precision (Nocete et al. 2005). Mining and smelting required large furnaces, which promoted increasing deforestation for fuel (Kaufman and Scott 2015). A similar process is seen in the African Iron Age, in which large areas were deforested and local environments were polluted (Kusimba 1996; Schmidt and Childs 1995). Ice cores from Greenland indicate a gradual increase in methane beginning around 5000 years before present (Ruddiman and Thompson 2001). As Smith and Zeder (2013) tell us, “well before the industrial era, human societies had begun to have a detectable influence on the earth’s atmosphere.”

Africa lost significant areas of rainforest beginning around 3000 years ago (Maley 2002). Rainforests started to shrink in size in the Maya areas of Guatemala and Mexico by 2000 years ago (Rosenmeier et al. 2002). The Inca, Aztec, and Maya civilizations substantially altered their landscapes to create sophisticated gardens, terraces, agricultural fields, and swamp gardens. These activities are measurable in lake and bog sediments, as well as in archaeological contexts such as ancient hearths and garbage dumps.

Agricultural activities were accompanied by severe soil erosion and local losses of plant and animal species. Many of the landscapes that we see as “natural” in the recent past were in fact the product of millennia of indigenous people skillfully altering their physical environment to suit economic, social, and ritual needs. The Amazon forest, for instance, is best viewed in 1492 not as a pristine paradise, but a giant garden in which certain kinds of plants and animals were encouraged to thrive while others were selected out (Heckenberger et al. 2003). As Iriarte et al. (2012) tell us, research indicates a “surge in anthropogenic burning attributed to pre-Columbian agricultural intensification, both through a more intensive practice of slash-and-burn agriculture and through a more sedentary type of agriculture that led to the formation of charcoal-rich, dark-earth soils.”

It was not, however, until the Industrial Revolution in the western world that CO2 emissions spiked beyond anything seen in the ancient past. At this time, societies started to mine fossilized carbon (coal, oil, and natural gas) that had been accumulating in the ground for tens of millions of years. Over a short 200 years, we have released into the atmosphere billions of tons of formerly trapped carbon. This spike is continuing to the present and is, with virtually no doubt, the cause of our increasingly warmer climates.

The lesson from archaeology is that for 10,000 years, humans have used their landscapes to grow food and build their villages and cities. However, we reached a tipping point in the 20th century when population densities became unsustainable. For example, people in the Amazon or Africa could cut down sections of rainforest, but, because they would move away after using the land, the forest would grow back. This was sustainable and did not threaten widespread deforestation. As the cycle of cutting and moving became shorter and shorter, a point was reached at which the forest could not recover. We unintentionally shifted from sustainable to unsustainable agriculture due to the sheer density of people using more resources per year than could grow back. The same is true for carbon emissions. We have reached a tipping point at which warming accelerates faster and faster, feeding on the previous warming conditions.

Humans are the most successful mammal on the planet. As the astrophysicist Adam Frank wisely tells us, “...climate change is the dire but unintended result of our species’ thriving.” Civilization and the benefits that it confers likewise come with costs. It is precisely our success that has altered the global ecosystem. It is now time, for us and future generations, to learn from the past and take the steps necessary to create a sustainable world. We got ourselves into this problem, and we can get ourselves out of it by instituting rational and fair policies to reduce our carbon footprint. As Frank says, “Once humans recognize that triggering climate change was an inevitable consequence of a civilizational project we began 10,000 years ago, it follows that combating climate change, too, must also be a collective process, requiring all the ingenuity our species can muster.”