Our Research Lab

Spatial Hearing - Attention and Hearing Devices

At the core of speech communication in complex scenes is the ability to selectively attend to relevant sounds while retaining the ability to switch attention to other sounds in a mixture. This complex process requires: 1) formation of distinct auditory objects; 2) segregation between foreground and background; 3) top-down modulatory effects of attention; 4) streaming over time; and 5) continued object maintenance for future behavioral needs.

At the neural level, auditory streams develop along a hierarchical pathway: primitive acoustic features are encoded as early as the auditory brainstem and continually refined up to the primary auditory cortex. From there, perceptual-feature representations and ultimately object- and category-specific representations are formed along the ventral cortical pathway. Because only some signals are relevant to a listener at any one time, and neural resources are limited, selective attention preferentially highlights objects of interest by modulating the representation of relevant and/or irrelevant features. With hearing loss, object formation, and with it subsequent auditory streaming, is weakened by declines in temporal fine-structure, spectral, and spectro-temporal coding. Perceptual features are also poorly encoded. For example, hearing-impaired listeners benefit less than normal-hearing listeners do from spatially separated talkers, likely due, in part, to poor integration of spatial cues and poor selective attention to space.

We have recently focused on measuring a form of neuro-modulation in younger and older listeners using electroencephalography (EEG; Eddins et al., 2018; Ozmeral et al., 2021), in which listeners either passively listen or actively respond to stimuli at an attended location. The EEG responses are characterized by deflections at ~100 ms and ~200 ms (i.e., N1 and P2) following a spatial change and, in directed-attention conditions, also by a late-latency deflection (~500 ms; i.e., P3). Importantly, the P3 response, which marks a higher-order process related to the sound’s spatial location, is significantly larger when the stimulus arrives at the attended location and is absent when the listener attends elsewhere. We interpret these data as evidence for “sensory gain control” of a perceptual feature (i.e., spatial location), and we ultimately will leverage this form of neuro-modulation to assess object formation with and without hearing aids.

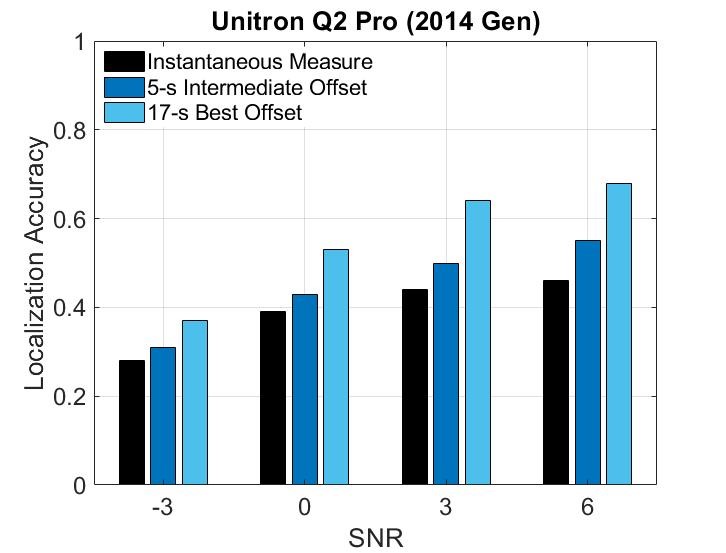

If you were to design a hearing aid to improve speech segregation in complex scenes, then you would pursue strategies that actively promote object formation and selective attention. Existing technologies may indeed accomplish this, but to date, there are no direct measures of the aided effects on auditory streaming. Modern hearing aids are equipped with directional microphones to achieve spatial gain reduction, a process that preferentially highlights a spatial location and has been shown to increase signal-to-noise ratios (SNRs) for spatially separated signals. Benefit from hearing-aid directional processing is typically gauged by self-report, listening effort, or sentence recognition in noise. However, this traditional approach has important limitations: 1) studies lack systematic control of such processing because of manufacturer-placed barriers, and 2) the data provide only indirect measures of the mechanisms responsible for the presumed advantages. In contrast, we link controlled evaluation of hearing-aid directional processing to a strong and established theoretical model of auditory object-based attention. In doing so, we can directly associate benefits with relevant neural mechanisms, and we can more precisely target innovation efforts for future hearing-aid technology.