Bellini College of Artificial Intelligence, Cybersecurity and Computing

A College for the Future

The Bellini College of Artificial Intelligence, Cybersecurity and Computing is the first of its kind in Florida and one of the first in the nation to bring together the disciplines of artificial intelligence, cybersecurity, and computing in a single dedicated college. We aim to position Florida as a global leader and economic engine in AI, cybersecurity, and computing education and research. Through strong industry and government partnerships, we foster interdisciplinary innovation and ethical technology development.

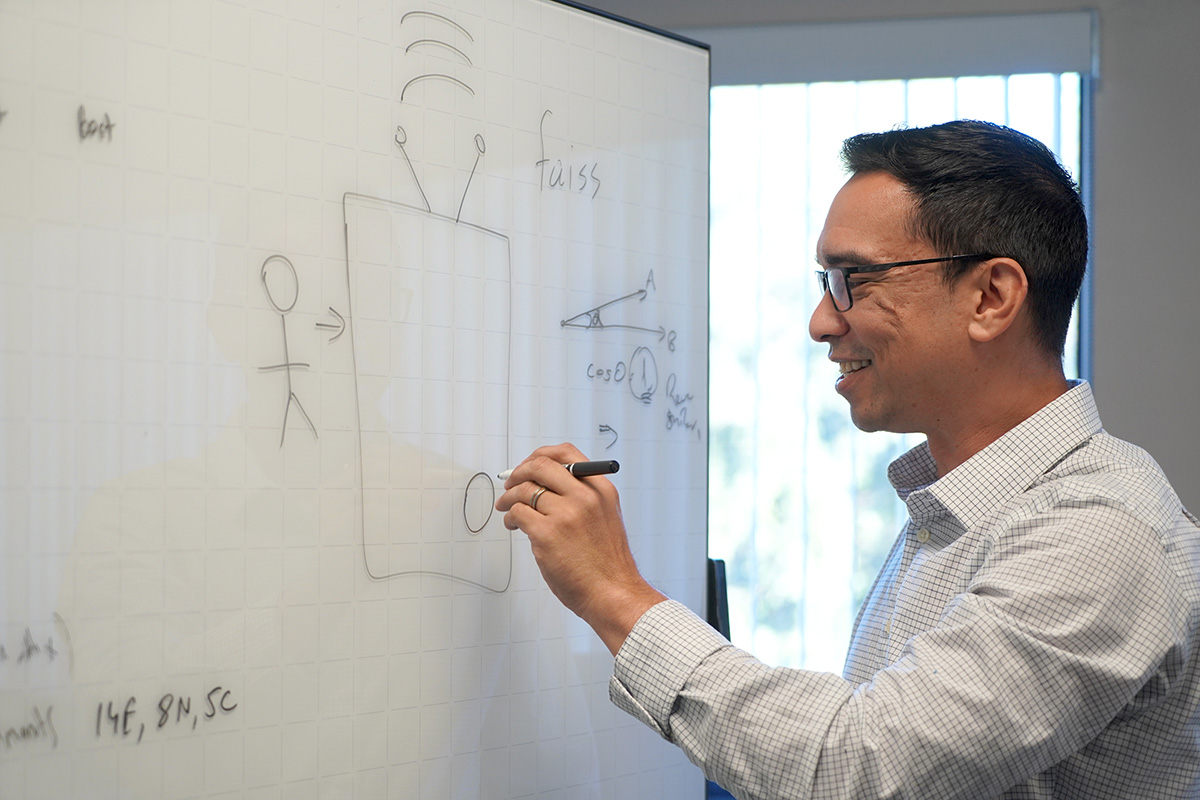

Our Faculty are Pioneering the Future

John Licato's interest in video games led to a passion for technology, and he has since dedicated his education, research, and career to the field. This fall, as an associate professor in the new Bellini College of Artificial Intelligence, Cybersecurity and Computing, he will share that passion with students and teach tomorrow's AI and cybersecurity leaders.